I think the chances of AI taking over Humanity's next iteration are 50%.

Our human species is complex! Our ambition as humans is filled with dualities: an epic capacity for doing good and doing better, but also vanity, greed and an anxious, belligerent, competitive nature, which most of the time destroys our kind, utopian, compassionate, constructive DNA.

As creators, we humans have built the most advanced empires in history and changed Planet Earth irrevocably. Our biggest creation to date is Artificial Intelligence. We are in the process of turning it into Artificial General Intelligence. The questions we face now as a species are:

• Is our creation leading us as a species to extinction?

• Are we doing enough to manage our AI Metaverse data narrative?

Artificial intelligence (AI) is by far our most advanced creation. Together with the array of technologies of the Fourth Industrial Revolution (4IR), we are building smart cities and the most advanced society ever, and the foundational, revolutionary shifts we have set in motion are pushing us further than in the previous tens of thousands of years of Homo sapiens.

AI is and can be used in many areas of the arts, including music, the visual arts and literature. And although still in its infancy, it is already making us question what it is to be human.

AI-generated art and music can be composed and performed in a variety of ways, such as through deep learning techniques, generative models, and AI-assisted composition tools. AI-generated visual art can be used to create original pieces, as well as to provide creative tools for artists. AI can also be used to generate literature, such as novels, poetry, and prose from advanced language models. AI can be used to analyse, change and shift existing language and the way we make works of art, and it is being used to identify trends, styles, and topics to inform new works. AI can also be used to create interactive experiences, such as art installations and performances.

The major challenge, and opportunity, is how AI will likely lead to an exponential enrichment of Humanity (an extension of human capabilities), as AI is used to help humans make decisions, automate tasks, and create new technologies.

We humans now stand with AI at a crossroads of two roads:

• A highway where AI could lead to a new evolutionary stage, as AI and humans merge and evolve together. This is not likely to happen in the near future, but it could potentially be realised in the longer term.

• A sinister road where AI amplifies the worst of Humanity and leads us to disaster, as history keeps reminding us. The myths of Icarus and Pandora's Box are now too small to capture the scale of the challenges that come with the inception of AI and AGI.

I asked OpenAI's GPT-3 this question:

How can humans and AI co-exist and evolve together?

The OpenAI chat answered this:

Humans and AI can co-exist and evolve together by working together to create technologies that improve the lives of both humans and AI. AI can help humans by automating tasks, such as data analysis and decision-making, and humans can help AI by providing insight and guidance to help AI make the best decisions. Additionally, humans and AI can collaborate to develop new technologies that can benefit both humans and AI, such as AI-assisted medical diagnosis and treatments.

We are moving to an emergent, AI-sentient, data-driven technology. Note how the OpenAI model answered me: such technologies would “improve the lives of both humans and AI”. This emergent AI is the first form of artificial intelligence that is data-driven and has the ability to learn and evolve on its own. Most of our social media platforms are already using some of this software and these solutions. At the moment, emergent AI technologies can be used in a wide variety of ways, such as analysing data, making decisions, and automating tasks. Emergent AI is capable of making decisions and taking action autonomously, and can be used to develop new technologies and applications.

The big elephant in the room is how humans can cope with this evolution of humanity, especially as humanity is going through a digital overdose and “digital obesity”, to quote futurist Gerd Leonhard.

“Digital obesity is basically about overconsuming (of information), not giving enough rest. It is basically the same as constantly eating, never doing anything else,” said futurist Gerd Leonhard at a forum organized by the Turkish Education Association.

To finish, a powerful quote that should make us think about the challenges of AI and AGI with the emergence of GPT-3:

“I think GPT-3 is artificial general intelligence, AGI. I think GPT-3 is as intelligent as a human. And I think that it is probably more intelligent than a human in a restricted way… in many ways it is more purely intelligent than humans are. I think humans are approximating what GPT-3 is doing, not vice versa.”

— Connor Leahy, co-founder of EleutherAI, creator of GPT-J (November 2020)

Humanity AI Crossover

Humanity is at a major, advanced crossover. Our unique differentiator in nature is our brain. The human brain is what has made humanity the leading species in planet Earth's ecosystem. The brain is nature's most complex and capable thinking machine, and over thousands of years of evolution it has created our unique intellectual property. Human beings, as a species, are not particularly strong, not very fast, and not resilient when it comes to the planetary food chain. The challenge now, as we grow our new evolutionary brains with AI, is whether these augmentations are becoming part of us or whether we, like so many wild animals, are moving toward extinction.

As humanity develops advanced artificial intelligence, humans will need to redesign themselves at an ever-increasing rate. Humans have so far been designed by slow biological evolution. That will change with AI’s new evolutionary path, where human engineering will redesign our DNA as we advance in parallel with the new paths of AI and robotics.

This comes together with spatial computing, IoT and sensors that will create entire digital twins: avatars of all of us, our cities and our society.

Humanity has to understand how its imagination and AI can walk side by side. We need human vision, technological desire and sufficient data to expand our human will and augment human design and engineering.

Imagination carries us to visible and invisible worlds that we have never visited before, without even needing to go anywhere!

However, imagination is no longer a privilege of the human mind. AI has changed the rules of the game.

Imagination has a direct application that feels like magic: guessing the representation of a subject in a different way or, in other words, translating an image from one representation to another. AI has to serve imagination, not the other way around. Edge computational AI is augmenting data and the limits of humanity and imagination.

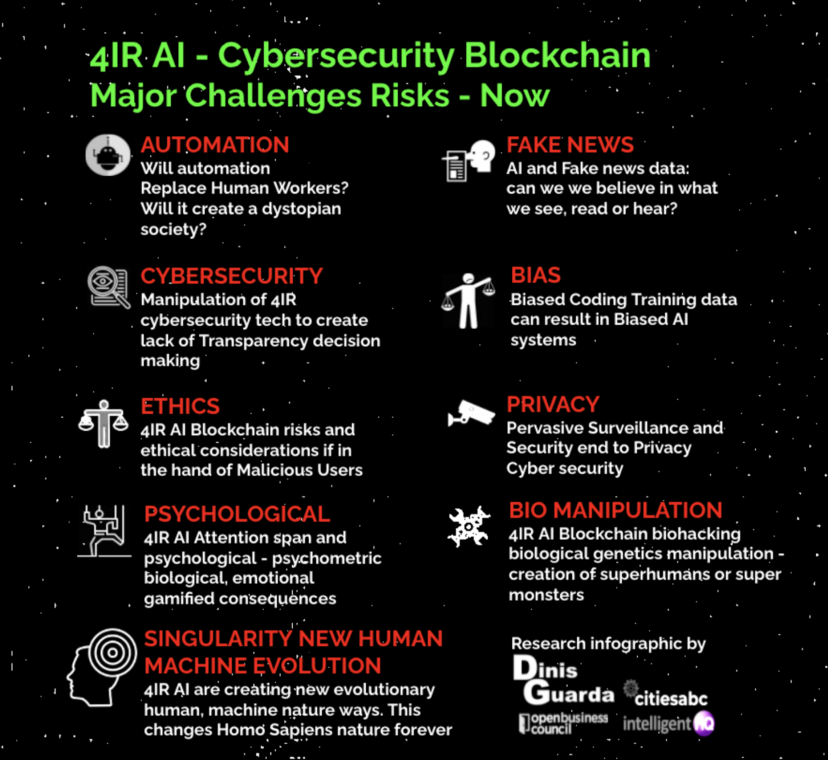

But with imagination we are facing major challenges in our technological iterations, especially when it comes to the 4IR challenges and risks we face now:

• Automation;

• Cybersecurity;

• Ethics;

• Psychological;

• Fake News;

• Bias;

• Privacy;

• Bio manipulation;

• Singularity: a new human-machine evolution.

Will AI become Humanity's Extension and a new Human Fast-Forward Evolution?

AI researchers say there’s a 10% chance their work will lead to human extinction

AI is here. Although the field only took off in a more advanced way in the 1940s, researchers have long been obsessed with what would become artificial intelligence. And our human ambition leads us to our biggest vanity. Since the inception of AI we have been toying with a tantalising idea: what if we designed computer systems through an approach similar to how the human brain works?

Our minds are made up of neurons, which send signals to other neurons through connective synapses. The strength of the connections between neurons can grow or wane over time. Connections that are used frequently tend to become stronger, and ones that are neglected tend to wane. Together, all those neurons and connections encode our memories and instincts, our judgments and skills — our very sense of self.
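The strengthening and waning of connections described above can be sketched as a toy update rule. This is only an illustration under stated assumptions, not a model of real neurons: the function names, the learning rate and the decay rate are all hypothetical values chosen for the example.

```python
def update_connection(weight, used, learning_rate=0.1, decay=0.02):
    """Return the new strength of one connection after one time step.

    Assumed toy rule: a connection that is used moves a little closer
    to 1.0; a connection that is neglected shrinks a little toward 0.0.
    """
    if used:
        return weight + learning_rate * (1.0 - weight)
    return weight - decay * weight


def simulate(usage_pattern, weight=0.5):
    """Apply the rule over a sequence of True/False 'used' flags."""
    history = [weight]
    for used in usage_pattern:
        weight = update_connection(weight, used)
        history.append(weight)
    return history


# One connection used at every step, one never used.
frequent = simulate([True] * 20)
neglected = simulate([False] * 20)

print(f"frequently used: {frequent[0]:.2f} -> {frequent[-1]:.2f}")
print(f"neglected:       {neglected[0]:.2f} -> {neglected[-1]:.2f}")
```

Artificial neural networks adjust their connection weights with far more elaborate rules, but the basic picture of strengths that grow with use and fade with neglect is the same intuition.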

Nearly half of AI researchers say there’s a 10% chance their work will lead to human extinction. As an AI developer, I increasingly believe the chance is more than 50%.

“AI is probably the most important thing humanity has ever worked on. I think of it as something more profound than electricity or fire.” Sundar Pichai

The potential of AI will grow exponentially, and its perils are becoming much harder to ignore. Former Google executive Mo Gawdat tells the story of how he became concerned about general AI like this: robotics researchers had been working on an AI that could pick up a ball.

“It’s taken me a while to get my mental and emotional arms around the dramatic implications of what I see for the future [in AI]. So, when people have never heard of ideas along these lines, and hear about it for the first time and have some superficial reaction, I really see myself some decades ago. I realise it’s a long path to actually get comfortable with where the future is headed.”

— Dr Ray Kurzweil, Transcendent Man documentary (2009)

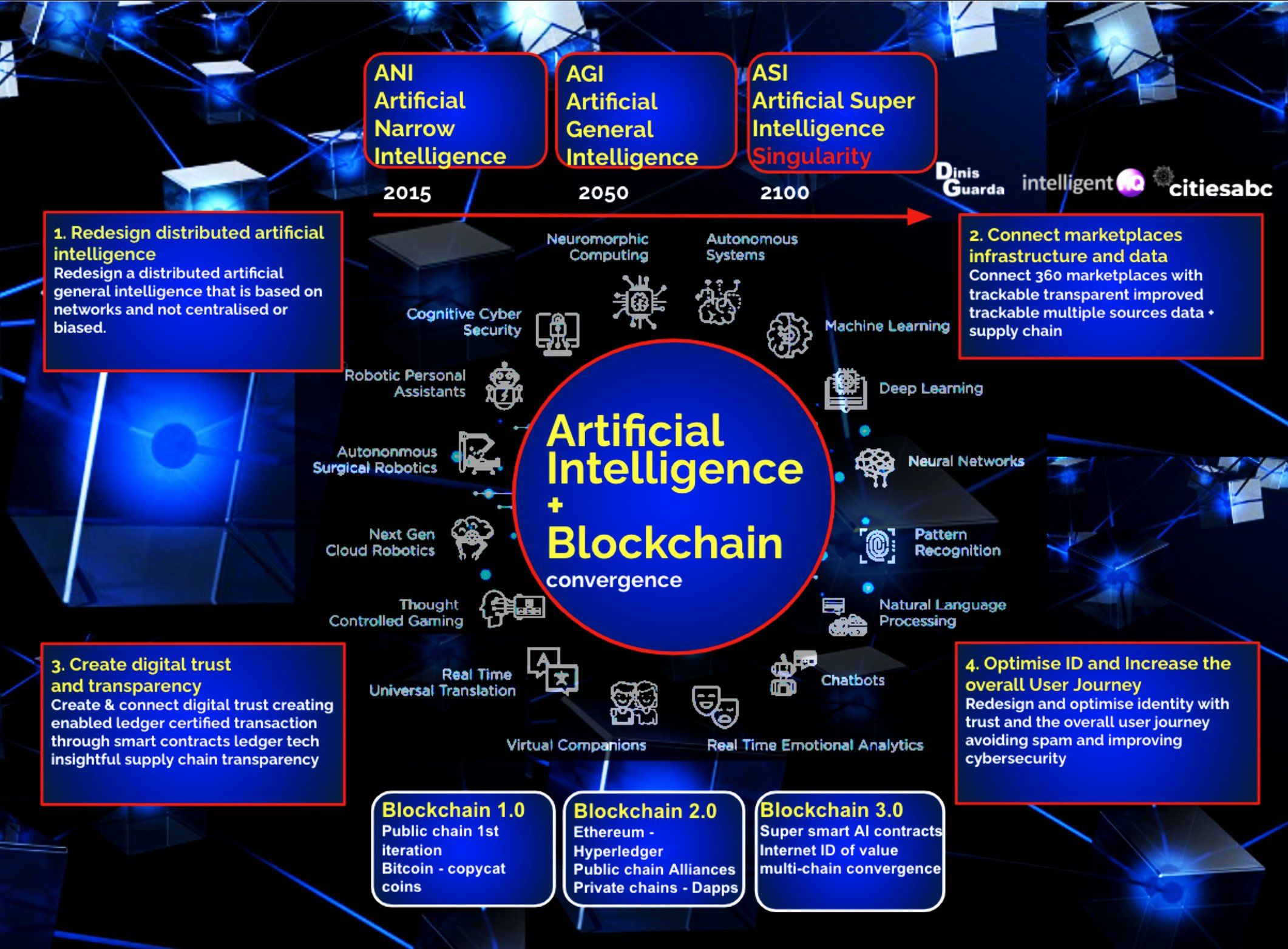

AI is going through stages of development and, like our own human evolution, its progress is not linear. It is interdependent with the 4IR set of technologies, especially the capacity to create secure IDs for data and identity using digital smart contracts and blockchain solutions.

I made this infographic to look at the multiple iterations of AI:

• ANI — Artificial Narrow Intelligence

• AGI — Artificial General Intelligence

• ASI — Artificial Super Intelligence

The infographic highlights how these notions can redesign our models, connect our digital platforms and marketplaces, and create trust and digital transparency. Moreover, I look at how AI can converge with blockchain tech.

Technology is the element that we can control up to a certain point.

The challenge is our very own Humanity. After many failures, humanity will merge with AI or, worse, be replaced by AI… The reality now is that we have opened a new Pandora's Box, much bigger than any in human history. Are we creating a new evolutionary HumanAI God, or a Demon?

OpenAI, OpenCog: a Journey towards a better Human Advanced Singularity or Human Extinction?

“The biggest implications of the singularity are that we don’t know the implications of the singularity”

— Dean Kamen, inventor of the Segway and iBOT, Transcendent Man documentary (2009, IMDb)

How do we design our new evolutionary road map as Humanity? We have entered the 21st century and we are still full of the contradictions that make us dangerous for our own planet and our species.

I want to highlight the narrative around OpenAI and OpenCog.

OpenAI is now shifting how we perceive AI. OpenAI is a world-leading artificial intelligence (AI) research laboratory consisting of the for-profit corporation OpenAI LP and its parent company, the non-profit OpenAI Inc. The company includes the following key personalities:

• CEO and co-founder: Sam Altman, former president of the startup accelerator Y Combinator

• President and co-founder: Greg Brockman, former CTO, 3rd employee of Stripe

• Chief Scientist and co-founder: Ilya Sutskever, a former Google expert on machine learning

• Chief Technology Officer: Mira Murati, previously at Leap Motion and Tesla, Inc.

• Chief Operating Officer: Brad Lightcap, previously at Y Combinator and JPMorgan Chase

Other relevant backers of the project include:

• Reid Hoffman, LinkedIn co-founder

• Peter Thiel, PayPal co-founder

• Jessica Livingston, a founding partner of Y Combinator

The company conducts research in the field of AI with the stated goal of promoting and developing friendly AI in a way that benefits humanity as a whole. The organisation was founded in San Francisco in late 2015 by Sam Altman, Elon Musk, and others, who collectively pledged US$1 billion. Musk resigned from the board in February 2018 but remained a donor. In 2019, OpenAI LP received a US$1 billion investment from Microsoft. OpenAI is headquartered at the Pioneer Building in Mission District, San Francisco.

OpenCog, on the other hand, is a project that aims to build an open source artificial intelligence framework. OpenCog Prime is an architecture for robot and virtual embodied cognition that defines a set of interacting components designed to give rise to human-equivalent artificial general intelligence (AGI) as an emergent phenomenon of the whole system. OpenCog Prime’s design is primarily the work of Ben Goertzel, while the OpenCog framework is intended as a generic framework for broad-based AGI research.

Research utilising OpenCog has been published in journals and presented at conferences and workshops including the annual Conference on Artificial General Intelligence. OpenCog is released under the terms of the GNU Affero General Public License.

OpenCog is in use by more than 50 companies, including the likes of Cisco.

How is OpenCog different from OpenAI? by Ben Goertzel

OpenCog is a few things:

• A software framework designed for the interoperation of multiple cognitive processes on a common weighted, labeled hypergraph knowledge store (the Atomspace)

• A project aimed at building a human-level (and more) AGI based on the design given in the book “Engineering General Intelligence vol. 2” by Goertzel, Pennachin and Geisweiller …

• A community of people, and a nonprofit foundation, oriented toward the above

OpenAI is much better funded than OpenCog currently, and is also currently focused on deep neural networks. OpenCog also has a deep neural net aspect (there is experimental work going on in Hong Kong and Ethiopia right now, integrating deep NNs for perception into the AtomSpace), but it’s not the central aspect of the architecture or project.

Alongside these, groups like Google Brain and DeepMind, with its AlphaFold platform, have started to solve one of life’s great mysteries: the protein folding problem.

AlphaFold, an artificial intelligence program developed by DeepMind, a subsidiary of Alphabet, performs predictions of protein structure. The program is designed as a deep learning system. AlphaFold has had two major versions, and it is now able to accurately predict the three-dimensional shape of a protein based solely on its one-dimensional amino acid sequence, a landmark achievement that had eluded human researchers for decades. Some researchers argue that AlphaFold represents the single most important achievement in the history of artificial intelligence.

In July 2021, DeepMind open-sourced AlphaFold and released a database of 350,000 three-dimensional protein structures. (As a reference point, the total number of protein structures known to mankind prior to AlphaFold was around 180,000.) Then, a few months ago, DeepMind publicly released the structures for another 200 million proteins — nearly all catalogued proteins known to science.

The scale of these operations will have an immense impact on healthcare and biology. Months after DeepMind’s latest release, there are more than 500,000 researchers from 190 countries using the AlphaFold platform to access 2 million different protein structures. Breakthroughs of AlphaFold’s magnitude require years for their full impact to manifest.

“If you want to get the best results on many hard problems, you must use deep learning,” said Ilya Sutskever, cofounder of OpenAI (which produced the text-generating model GPT-3 and the image generator DALL-E 2, among others), in 2019.

How do we design AI and our own new evolutionary iteration when we are so full of issues, ranging from the emotional and psychological to bipolarity and aggression, plus bullying and, worst of all, killing instincts?

Humans and our brains are complex and deadly. Our instability is as old as our species.

To design new artificial intelligence systems we have to look at the nature of our language(s). Our languages are the most advanced intelligence paradigm of what makes us human, and are somehow our inner, organically evolving algorithm.

When we think, we process intelligence through patterns of thinking, mostly designed into us by the tribes we are part of. These patterns are now replicated by advanced software like GPT-3, which reproduces them in a generalised way: algorithms that replicate the generic design of language can respond to our thinking and can increasingly do things outside what they were trained to do. This is partly because they don’t have the additional organic, emotional layers that humans have, with their body metabolisms and their adaptation to their ecosystem, weather, desires, frustrations and memories.

The newly designed AI is highly competent and, using rationality and generic language processing, can and will increasingly beat human limitations. This opens many doors of possibility and other approaches in terms of performance, based on the machine learning (ML) benchmarks that researchers are increasingly using to evaluate new systems.

The patterns of data and language that we are feeding our human-built AI are not infinite (some researchers estimate that all the books of humanity number around 200 million or fewer). The challenge comes when our AI creation has processed all of this data, which scientifically is not that difficult. And what do we then do about the “scalable” element when building AI?

Scalability is the challenge at the technological level: if data is the new oil, it is an oil that will be fully extracted sooner than we think. The area of AI for which this concern is most pressing is language models. Research efforts like DeepMind’s Chinchilla work have highlighted that the most effective way to build more powerful large language models (LLMs) is not to make them larger but to train them on more data.

However, the language data that exists in the world is not endless. According to one research group, the world’s total stock of high-quality text data is between 4.6 trillion and 17.2 trillion tokens. This includes all the world’s books, all scientific papers, all news articles, all of Wikipedia, all publicly available code, and much of the rest of the internet, filtered for quality (e.g., the total of one billion webpages, quality blogs, and all our major social media platforms). Another recent estimate puts the total figure at 3.2 trillion tokens.

So the reality is that AI like DeepMind’s Chinchilla model, which was trained on 1.4 trillion tokens, will be fast to process all of this.

In other words, we may be well within one order of magnitude of exhausting the world’s entire supply of useful language training data in the near future.
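The arithmetic behind that claim can be checked in a few lines. The sketch below uses only the figures quoted in this article (1.4 trillion tokens for Chinchilla; 4.6, 17.2 and 3.2 trillion tokens for the stock estimates); the variable and function names are illustrative assumptions, not part of any published tool.

```python
import math

# Figures quoted in the article, in tokens.
CHINCHILLA_TRAINING_TOKENS = 1.4e12
STOCK_ESTIMATES = {
    "lower estimate": 4.6e12,
    "upper estimate": 17.2e12,
    "alternative estimate": 3.2e12,
}


def headroom(stock_tokens, used_tokens=CHINCHILLA_TRAINING_TOKENS):
    """Return (ratio, orders of magnitude) between the stock and what was used."""
    ratio = stock_tokens / used_tokens
    return ratio, math.log10(ratio)


for label, stock in STOCK_ESTIMATES.items():
    ratio, orders = headroom(stock)
    print(f"{label}: {ratio:.1f}x Chinchilla's training set "
          f"({orders:.2f} orders of magnitude)")
```

On these numbers, the lower and alternative estimates leave only a few multiples of Chinchilla's training set, while the upper estimate is roughly twelve times larger, which is about one order of magnitude.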

After this, researchers will be looking at one possible new solution, synthetic data, though the details of how to build these models, and how to scale and operationalise this, are far from clear.

Another recent idea highlights the possibility of systematically transcribing the spoken content of the world’s meetings (after all, oral traditions, animal languages and spoken discussions represent vast troves of text data that today still go uncaptured).

Another element we are pushing forward is the construction of humanoid robots, which are fast becoming a reality, along with the usage of AI in healthcare and biological systems.

From a business-model perspective it is straightforward: you keep throwing more money and more data into these language-processing neural networks (make them bigger, spend longer training them, harness more data) and they do better, and better, and better. The problem comes when the speed of processing passes that of humans.

This is where we reach some type of singularity. But the challenge with the singularity, as with Humanity’s DNA, is that no two humans are alike, and our humanity is as chaotic as it is harmonic, or more chaotic than rational if we look at history.

No one has ever discovered what makes us human. From ancient Mesopotamia and Egypt to the Greeks with Socrates and Plato and the Romans with Stoicism, the philosophical question remains.

When we apply this to AI we are looking at biology, science, engineering, technology and business. These models of course need language and neural networks, which are rooted in the history and philosophy of language.

AI, and especially AGI, are touching the very essence of humanity. And while the limits of this principle are yet to be discovered, technological evolution is already outpacing the philosophical evolution of human perception. And we have to be realistic: the major tech companies need profit in a society that never stops pushing itself in competitive ways and, in many cases, through war games and litigation. For technology and science, the more you put in, the more you get out. But for humanity and nature there is a need for balance and harmony.

AI and AGI models also need technological power and new energy systems, and the breathless energy that pervades so much of AI right now is part of the major challenges we face as a human society and civilisation.

The major question we have to define when it comes to AI, AGI and the very notion of Singularity is not simply what they can do, but where they’re going. And especially: what are we going to do about it?

The narrative is simple: we are the parents of a child called AI. This child is, from its inception, surpassing our very own ways of thinking and processing intelligence. As it evolves and grows, the child will want to “kill the father”.

Now the challenge is how we manage this old psychological complex. I asked OpenAI's GPT-3 to describe the “kill the father” complex. This is “his” description:

The “kill the father” complex is a psychological term used to describe a person’s unconscious desire to overthrow an authority figure in their life, usually a parent or mentor. This complex is usually seen in children who are in the process of developing their own sense of autonomy and independence. The “killing” of the father can be literal or symbolic, such as rejecting the values and ideas of a parental figure or rejecting their authority. This complex can be seen as a normal part of growing up and developing an identity separate from one’s parents or authority figures, but can also be seen as a sign of psychological distress if the desire to overthrow a parental figure is taken to extreme levels.

Again, when I asked OpenAI's GPT-3 what major AI ethics principles we should consider, the answer was this:

• Data privacy: AI systems should not access data without permission, and should not use it for any purpose other than what it was originally intended for.

• Fairness: AI systems should not discriminate against any individual or group based on race, gender, age, sexual orientation, or other protected characteristics.

• Transparency: AI systems should be open and transparent about how they make decisions, so that users can understand and trust the system.

• Explainability: AI systems should be able to explain their decisions, so that users can understand why the system made certain decisions.

• Safety: AI systems should be designed to be safe and secure, and not cause any harm to the user.

• Accountability: AI systems should be held accountable for any mistakes they make, and those responsible should be held accountable for any harm caused by the system.

But we know it is much more than these 6 elements. AI and AGI are overwriting our evolutionary path as humans and opening new ways, highways or sinister roads.

Some of us are aware of this, but the truth is that 99% of humanity is more focused on getting on with their lives, in many cases in survival mode, even if statistically we live in the best stage of human evolution in terms of social, health and economic levels.

“Humans think in stories rather than in facts, numbers, or equations, and the simpler the story, the better.” — Yuval Noah Harari

AI thinks in rational facts, numbers, or equations. At this stage I want to highlight the old Stoics from Greece and Rome:

“First learn the meaning of what you say, and then speak”. Epictetus

“The more we value things outside our control, the less control we have.” Marcus Aurelius

“Cease to hope and you will cease to fear.” Seneca

“Only time can heal what reason cannot.” Seneca

“Death smiles at us all, but all a man can do is smile back.”

The narrative of Humanity is in our hands.

“Questions you cannot answer are usually far better for you than answers you cannot question.”— Yuval Noah Harari

It is up to us to ask better questions about what we want as a human species and how we work as a society. Our space and time for action as humans is limited.